Exploring the Emotional Depths of a 3D Short Drama

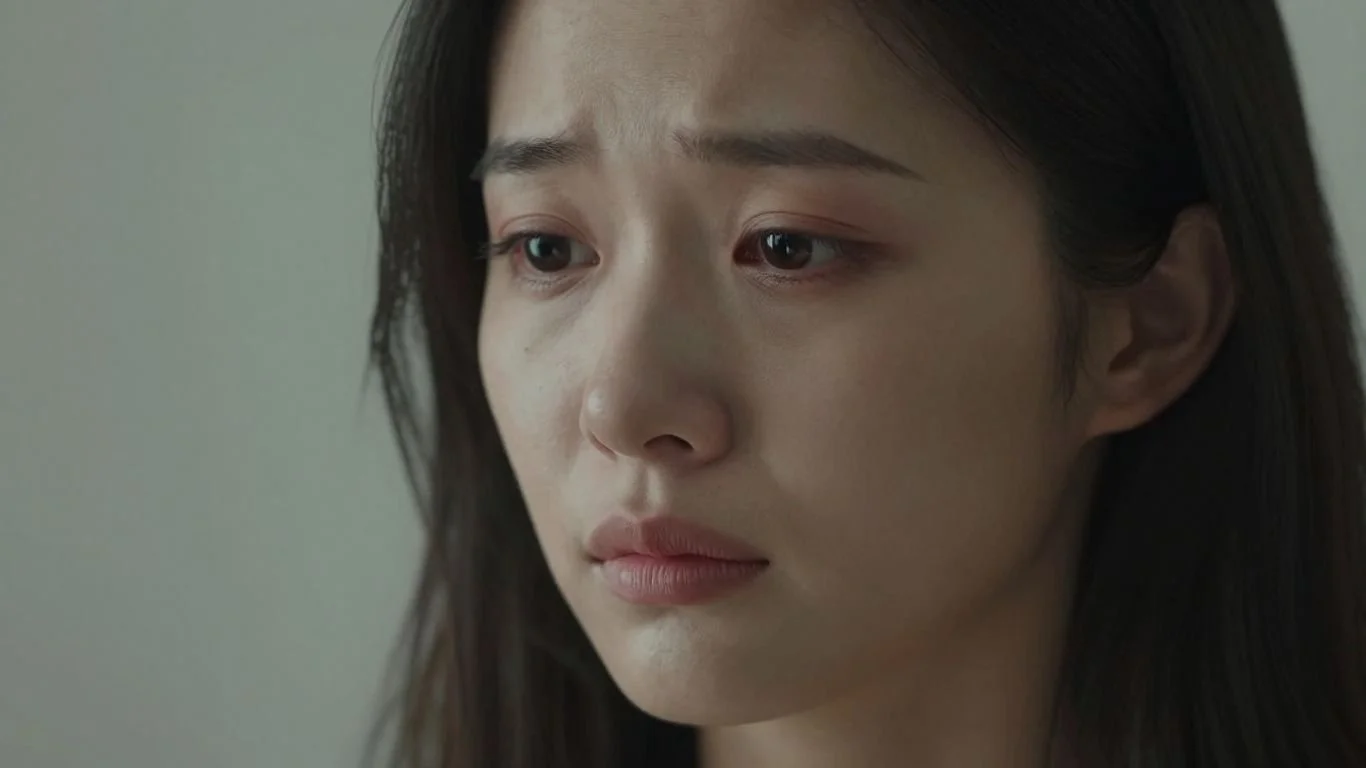

Believable characters are what make a 3D short drama work. It's not just about making them look good; it's about how they act and express themselves. We're looking at how generative models can produce emotional animation and what people actually think and feel when they watch it, particularly in virtual reality. This whole area of 3D short drama is getting more interesting as the technology improves.

Key Takeaways

- New ways of making 3D characters show emotions are being tested. These aren't just simple movements; they aim for more complex feelings.

- When making animated characters, especially for 3D short drama, focusing on how happy or neutral they seem is a good starting point before tackling more complex emotions.

- Virtual reality (VR) is a good place to test how real these animated emotions feel. It helps measure things like how natural the character seems and if people enjoy the experience.

- Animations need to look and feel consistent, even when the character says the same line more than once. People notice if a character's movements change too much from one take to the next.

- While computers can make characters move and talk, getting the emotional expression just right, especially for less intense feelings, is still a challenge for 3D short drama.

Understanding Emotional Nuances in 3D Short Drama

When we talk about 3D short dramas, especially those meant for virtual reality, it's not just about making characters look good. We really need to get into how they feel and how they show it. It's a whole spectrum, you know? Not just happy or sad, but all the shades in between.

The Spectrum of Affective States

Think about it – human emotions aren't just simple boxes we tick. There's a whole range. We've got the big, obvious ones like joy or anger, but then there are the quieter feelings, the subtle shifts that make a character feel real. Trying to capture that full range is tough. We're looking at everything from intense excitement to a more mellow, relaxed vibe. It’s about more than just a smile or a frown; it's the whole package of how someone expresses what's going on inside.

Bridging Discrete Emotions and Continuous Affect

So, how do we actually make these characters show emotion? We can think of emotions as distinct categories, like 'happy' or 'sad'. But often, emotions are more like a sliding scale. A character might be a little bit happy, or really, really happy, or maybe happy but also a bit nervous. This is where bridging discrete emotions and continuous affect becomes so important for believable characters. We need models that can handle both the clear-cut feelings and the more fluid, blended emotional states. It’s like trying to paint a picture with only primary colors versus having a full palette – the latter gives you so much more depth.
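
One way to picture the bridge between categories and a sliding scale is to place each discrete label at a point in a two-dimensional valence/arousal space and blend between those points. The sketch below is only an illustration: the `Affect` class, the `ANCHORS` coordinates, and both helper functions are hypothetical and not taken from any model discussed here.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Affect:
    valence: float  # unpleasant (-1.0) to pleasant (+1.0)
    arousal: float  # calm (-1.0) to excited (+1.0)


# Hypothetical anchor points, picked by hand for illustration only.
ANCHORS = {
    "happy": Affect(0.8, 0.6),
    "neutral": Affect(0.0, 0.0),
    "nervous": Affect(-0.4, 0.7),
    "sad": Affect(-0.7, -0.5),
}


def blend(weights: dict[str, float]) -> Affect:
    """Weighted average of discrete emotions, giving a continuous point."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    valence = sum(ANCHORS[name].valence * w for name, w in weights.items()) / total
    arousal = sum(ANCHORS[name].arousal * w for name, w in weights.items()) / total
    return Affect(valence, arousal)


def nearest_label(point: Affect) -> str:
    """Map a continuous point back to the closest discrete label."""
    def squared_distance(name: str) -> float:
        anchor = ANCHORS[name]
        return (anchor.valence - point.valence) ** 2 + (anchor.arousal - point.arousal) ** 2
    return min(ANCHORS, key=squared_distance)


# "Happy but also a bit nervous" as a blend rather than a single category.
mostly_happy = blend({"happy": 0.75, "nervous": 0.25})
print(mostly_happy, nearest_label(mostly_happy))
```

The blended point keeps the nuance (slightly lower valence, slightly higher arousal than plain "happy"), while `nearest_label` shows how the same point can still be reported as a clear-cut category when that is all a downstream system needs.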

Focusing on High and Mid Arousal

For a lot of the work being done, researchers are focusing on specific points on this emotional map. A lot of attention has gone into high-arousal emotions, like happiness or excitement, because they tend to be more visually obvious. Think energetic gestures and a bright facial expression. Then there are mid-arousal states, like a calm or neutral feeling. These are important too, but they can be trickier to animate convincingly. Getting both right is key to making interactions feel natural, not robotic. Happy and neutral make a good starting point, but there's still a lot to explore, especially with less intense feelings. We're still figuring out how to make a character's subtle boredom or quiet contemplation feel just as real as their outright joy, and that takes a lot of careful observation and technical skill.

Capturing the full spectrum of human emotion in digital characters is a complex task. It requires moving beyond simple expressions to represent the subtle nuances that make interactions feel genuine. The goal is to create virtual beings that don't just mimic emotion, but convincingly portray it in a way that audiences can connect with.

Evaluating Generative Models for Emotional Animation

When we talk about making 3D characters feel alive, especially in short dramas, how they express emotions is a big deal. We're looking at how well different computer programs, called generative models, can create these emotional animations. It's not just about making a character smile or frown; it's about making those expressions feel real and connected to what they're saying.

Speech-Driven Animation and Emotional Expression

Lots of these models take spoken words and turn them into animations. This is called speech-driven animation. The trick is getting the animation to carry emotion as well. Some models just focus on making the mouth move correctly, which is called lip-sync. Others add body movements too. But the real challenge is making the character come across as happy, sad, or angry through the animation. We're comparing different approaches to see which ones are best at adding that emotional layer. Some systems are getting pretty good at this and can generate fairly expressive digital characters.

Comparing Synthetic vs. Real Human Performance

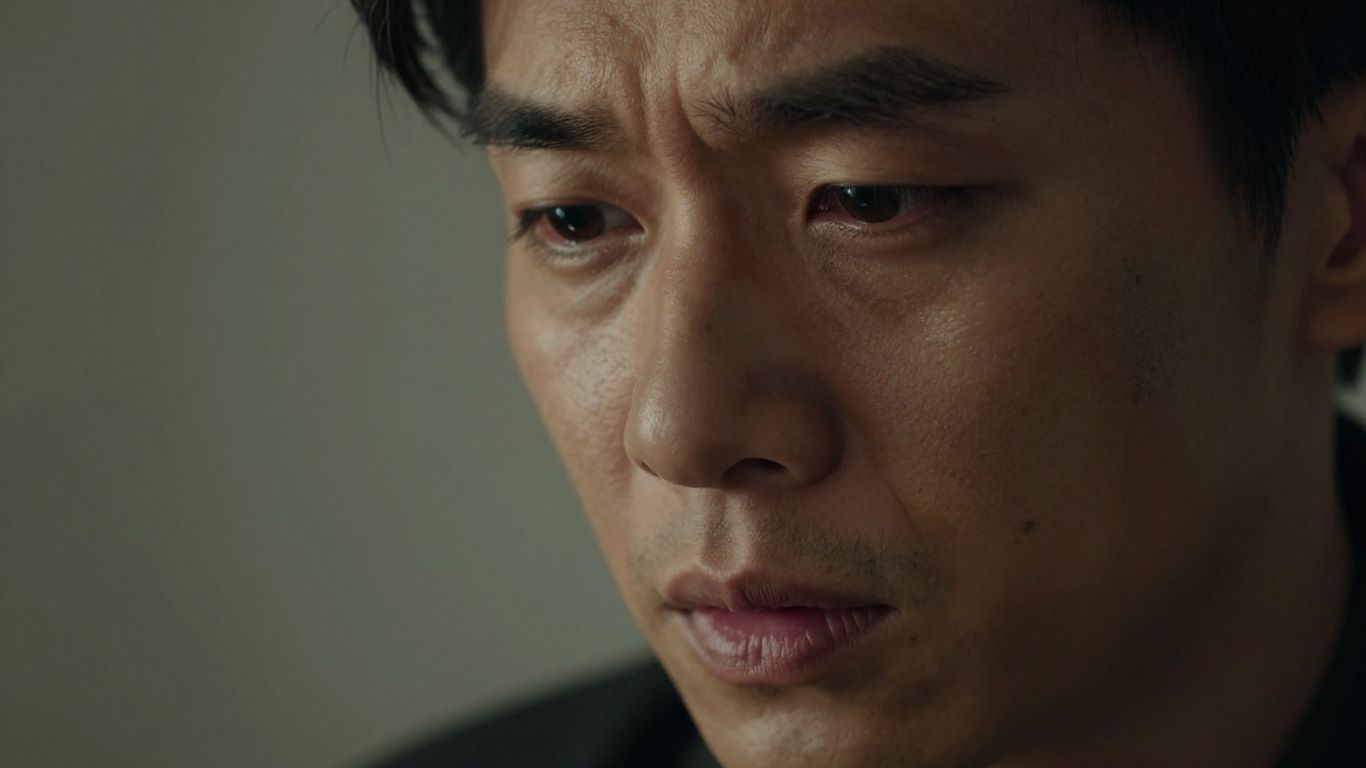

To really know if these computer-generated animations are any good, we need to compare them to actual humans. We looked at how well the generated animations matched up against recordings of real actors. It turns out, even the best computer models still have a way to go. While they can mimic expressions, the subtle nuances of a real person's performance are hard to replicate. For instance, when it comes to facial expressions, the real actor often got higher marks. However, the generative models did show promise in creating a good variety of animations.

Assessing Perceptual Impact in VR

Putting these animations into a virtual reality (VR) setting changes things. How we perceive an animation in VR is different from watching it on a flat screen. We asked people to interact with these animated characters in VR and tell us what they thought. We measured things like how realistic the emotions seemed, how natural the movements felt, and whether they enjoyed the interaction. The goal is to understand the user's experience, not just the technical quality of the animation. It's about whether the character feels present and believable. This kind of evaluation is key for creating truly immersive experiences in VR.

Here's a quick look at what we measured:

- Realism: Did the emotions look genuine?

- Naturalness: Did the movements flow smoothly?

- Enjoyment: Was it pleasant to watch and interact with?

- Diversity: Did the character show a range of expressions?

- Interaction Quality: How well did the character respond and engage?
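
To make the list above concrete, here is a minimal sketch of how per-participant ratings on those five measures might be averaged for each animation condition. The field names, the condition labels, and the 1 to 5 rating scale are all assumptions made for the example; the article does not say how the scores were actually collected or analysed.

```python
from collections import defaultdict
from statistics import mean

# The five measures listed above, as hypothetical field names.
METRICS = ("realism", "naturalness", "enjoyment", "diversity", "interaction_quality")


def summarize(responses):
    """Average each metric per condition.

    `responses` is an iterable of dicts, each holding a "condition" key
    plus one numeric score per metric (assumed here to be on a 1-5 scale).
    """
    buckets = defaultdict(lambda: defaultdict(list))
    for response in responses:
        for metric in METRICS:
            buckets[response["condition"]][metric].append(response[metric])
    return {
        condition: {metric: mean(scores[metric]) for metric in METRICS}
        for condition, scores in buckets.items()
    }


# Made-up ratings from two participants for one hypothetical condition.
example = [
    {"condition": "model_a", "realism": 4, "naturalness": 3, "enjoyment": 2,
     "diversity": 4, "interaction_quality": 3},
    {"condition": "model_a", "realism": 2, "naturalness": 3, "enjoyment": 4,
     "diversity": 4, "interaction_quality": 1},
]
print(summarize(example)["model_a"])
```

Keeping the scores split by metric, rather than collapsing them into one number, makes it easier to spot cases where an animation looks realistic but is still not enjoyable to interact with.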

It's easy to get caught up in the technical details of animation generation, like frame rates or polygon counts. But when it comes to emotional storytelling, what really matters is how the audience feels about it. User feedback is the ultimate test.

User-Centric Metrics for 3D Short Drama

Realism and Naturalness of Emotional Portrayal

When we're watching a 3D drama, especially in VR, we want it to feel real, right? It's not just about whether the character's mouth moves with the words, but whether their whole body conveys the emotion. We looked at how realistic and natural the emotional expressions seemed. This means checking if the facial movements and body language actually match the feelings being shown. For instance, a happy character should look genuinely happy, not just like they're going through the motions. We found that characters animated by models that handle emotion explicitly were easier for people to read than characters animated only to match the speech. Interestingly, while characters showing happiness (a high-arousal emotion) felt more real, those showing a calmer, neutral state didn't quite hit the mark. It seems current tech is better at big emotions than the small, everyday ones.

The Role of Enjoyment and Interaction Quality

Beyond just looking real, we also wanted to know if people enjoyed interacting with these characters and how good the overall interaction felt. This is where things got a bit tricky. Even when the animations looked pretty good, people didn't always rate the enjoyment or interaction quality very high. This suggests that just having good-looking animation isn't enough. We need to think about the whole experience. How does the character respond? Does it feel like a real conversation, or just a pre-programmed show? Getting this right is key to making VR dramas engaging.

Measuring Animation Diversity

Another thing we checked was how varied the animations were. If a character always makes the same gestures or facial expressions, it gets boring fast. We want characters that can show a range of emotions and movements. Thankfully, the systems we looked at did a decent job here, showing a good mix of animations. This is important because it helps make the characters feel more alive and less robotic. A diverse animation set means the character can react more appropriately to different situations in the drama.
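
Alongside asking viewers, variety can also be approximated with a simple number. The sketch below scores a set of clips by their average pairwise distance, assuming each clip has already been reduced to a fixed-length feature vector (for example, averaged joint angles). Both that representation and the `diversity` function are assumptions for illustration, not the measure used in the evaluation described here.

```python
from itertools import combinations
from math import dist


def diversity(clips):
    """Average Euclidean distance over all pairs of clip feature vectors.

    Returns 0.0 when there are fewer than two clips to compare.
    """
    pairs = list(combinations(clips, 2))
    if not pairs:
        return 0.0
    return sum(dist(a, b) for a, b in pairs) / len(pairs)


# Three identical clips have no variety; two different clips do.
print(diversity([[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]))
print(diversity([[0.0, 0.0], [3.0, 4.0]]))
```

A score like this cuts both ways: a higher value means more varied motion, but when the same line is animated several times, a very high value can also signal the kind of inconsistency viewers tend to notice.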

Ultimately, judging these 3D dramas just by how technically perfect the animation is doesn't tell the whole story. We need to ask people how they feel about it – does it make them happy, sad, or just bored? That's the real test.

Technical Challenges and Future Directions

So, we've talked a lot about making these 3D characters feel real, right? But getting there isn't exactly a walk in the park. There are some pretty big hurdles we need to jump over. For starters, VR itself is a beast. Making things look good and run smoothly in a virtual space takes a ton of computing power. Streaming all that detailed 3D data without lag is a major headache.

Addressing VR Rendering and Streaming Demands

Think about it: you're in VR, and you want the character to look sharp and move without stuttering. That means rendering complex scenes in real time, which is tough. Then there's the streaming part. Sending all that visual information to your headset needs to be fast and reliable. If it's not, you get lag and stutter, which can bring on motion sickness, and nobody wants that. We need better ways to compress and send data, and graphics hardware that can handle a lot more. It's a constant race to keep up with what VR demands. The animation industry is always looking for new ways to do things, and VR is a big part of that.
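
A bit of back-of-the-envelope arithmetic shows why both rendering and streaming are demanding. The two helpers below are a rough sketch: the function names are made up, and the refresh rate and resolution in the usage lines are example inputs, not the specification of any particular headset.

```python
def frame_budget_ms(refresh_hz):
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / refresh_hz


def raw_bitrate_mbps(width, height, fps, bytes_per_pixel=3, eyes=2):
    """Uncompressed video bitrate in megabits per second (1 Mb = 1e6 bits)."""
    bits_per_frame = width * height * bytes_per_pixel * 8
    return bits_per_frame * fps * eyes / 1e6


# Example inputs only: a 90 Hz display and 2000 x 2000 pixels per eye.
print(round(frame_budget_ms(90), 2), "ms per frame")
print(raw_bitrate_mbps(2000, 2000, 90), "Mbit/s uncompressed")
```

Even with modest example numbers, the per-frame time budget is tight and the uncompressed data rate is far beyond what a typical network link carries, which is why compression and faster graphics hardware keep coming up.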

Improving Temporal Coherence in Reconstruction

Another tricky bit is making sure everything flows naturally over time. When we're generating animations, especially from things like speech, it's easy for movements to look jerky or out of sync. We need the character's expressions and body language to match what they're saying, not just at one moment, but continuously. This means our models need to be really good at predicting what comes next, keeping the motion smooth and believable from start to finish. It's like trying to draw a flipbook where every single page looks perfect and connected to the one before and after it.
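
Jerkiness can be put into numbers in a simple way. The sketch below treats one joint's position over time as a list of values, measures jitter as the average size of the frame-to-frame change in velocity, and applies an exponential moving average as a basic smoothing step. Both functions are illustrative assumptions, not the method used by any specific model mentioned here.

```python
def jitter(track):
    """Mean absolute second difference of a 1D trajectory.

    Motion at constant velocity scores 0.0; back-and-forth jumps score high.
    """
    if len(track) < 3:
        return 0.0
    second_diffs = [
        track[i + 1] - 2 * track[i] + track[i - 1]
        for i in range(1, len(track) - 1)
    ]
    return sum(abs(d) for d in second_diffs) / len(second_diffs)


def smooth(track, alpha=0.5):
    """Exponential moving average; a smaller alpha smooths more heavily."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    out = []
    for value in track:
        out.append(value if not out else alpha * value + (1 - alpha) * out[-1])
    return out


shaky = [0.0, 1.0, 0.0, 1.0]
print(jitter(shaky), jitter(smooth(shaky)))
```

Smoothing like this trades responsiveness for stability: it removes jerky jumps, but too much of it also flattens the quick, sharp movements that make an excited character look excited.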

Exploring Lower Arousal Emotional States

Most of the work so far has focused on big, obvious emotions – the happy, sad, angry ones. But humans have a whole range of feelings, including the quieter ones, like boredom, contemplation, or mild contentment. These lower arousal states are subtle and hard to capture. They often show up in small shifts in posture, breathing, or micro-expressions. Getting these right is key to making characters feel truly human, not just animated puppets. It requires a deeper look into how these nuanced feelings are expressed physically.

The quest for realistic virtual characters involves tackling significant technical issues. From the demands of VR rendering and data streaming to the subtle art of temporal coherence in animation and the nuanced expression of low-arousal emotions, each presents a complex puzzle. Solving these will bring us closer to truly believable digital beings.

We're still figuring out the best ways to measure and create these subtle emotional cues. It's not just about lip-syncing; it's about the whole package. 3D animation is clearly heading towards more immersive and believable virtual experiences.

The Impact of Explicit Emotion Modeling

Beyond Lip-Sync and Body Gestures

So, we've been talking a lot about making 3D characters move and talk realistically, right? A lot of the focus has been on getting the lip movements perfect and making sure the body gestures match the speech. That's important, no doubt. But what about the actual feeling behind the words? That's where explicit emotion modeling comes in. It's about going deeper than just making the mouth flap and the arms wave. We're talking about making the character feel happy, sad, or surprised in a way that audiences can actually pick up on.

Disentangling Speech Components for Emotion

Think about how we talk. It's not just the words themselves. There's the tone of voice, the speed, the pauses – all these things carry emotional weight. Explicit emotion modeling tries to break down speech into its core parts: the actual words (content), the emotional tone, and the overall style. This helps generative models understand how to express an emotion, not just what emotion to express. For example, saying "Great job!" can be genuinely happy or dripping with sarcasm. The model needs to figure out which one it is. This is a tricky business, and current methods are still working on getting it right. Some models try to isolate emotion from speech, but they often struggle with more than one emotion at a time.

- Content: The actual words being spoken.

- Emotion: The underlying feeling being conveyed (e.g., joy, anger, sadness).

- Style: How the speech is delivered (e.g., fast, slow, loud, soft).
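
The three-way split above can be pictured as a small record with one field per component. In the sketch below, the `SpeechFactors` class, the `with_emotion` helper, and the label strings are hypothetical placeholders; real systems typically represent each factor as a learned vector rather than a text label.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SpeechFactors:
    content: str  # the actual words being spoken
    emotion: str  # the underlying feeling, e.g. "joy"
    style: str    # how the speech is delivered, e.g. "fast"


def with_emotion(factors, emotion):
    """Return a copy with only the emotion swapped; content and style stay put."""
    return replace(factors, emotion=emotion)


sincere = SpeechFactors(content="Great job!", emotion="joy", style="fast")
annoyed = with_emotion(sincere, "anger")
print(sincere)
print(annoyed)
```

The point of keeping the factors separate is exactly this kind of swap: the same words and delivery can be paired with a different feeling, and the animation should change accordingly.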

Recognizing Emotions in 3D Short Drama

When we watch a 3D short drama, we're not just looking for perfect animation; we want to feel something. If a character is supposed to be happy, we need to see it in their eyes, their smile, their posture. If they're sad, we need to see that too. Models that explicitly focus on emotion tend to do a better job of this. In studies, when characters were animated with a focus on expressing happiness, people recognized that emotion more accurately than when the animation just focused on matching speech. It seems that really nailing the emotional expression makes a big difference in how believable the character feels. It's not just about looking good; it's about feeling real. This is especially true for high-arousal emotions like happiness, which tend to have more pronounced physical cues. Subtle emotions, on the other hand, are still a big challenge for these systems. We're seeing that models that can disentangle speech components for emotion are showing promise in creating more convincing characters, moving beyond simple lip-sync and body gestures.
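
Recognition accuracy, as used above, is simply the share of trials in which a viewer named the emotion the animation was meant to show. Here is a minimal sketch of that calculation per animation condition; the tuple layout, the condition names, and the sample trials are invented for the example and are not results from any study.

```python
from collections import Counter


def recognition_accuracy(trials):
    """Fraction of trials per condition where perceived == intended.

    `trials` is an iterable of (condition, intended, perceived) tuples.
    """
    hits, totals = Counter(), Counter()
    for condition, intended, perceived in trials:
        totals[condition] += 1
        hits[condition] += int(intended == perceived)
    return {condition: hits[condition] / totals[condition] for condition in totals}


# Made-up trials for two hypothetical conditions.
sample = [
    ("emotion_aware", "happy", "happy"),
    ("emotion_aware", "happy", "happy"),
    ("speech_only", "happy", "neutral"),
    ("speech_only", "happy", "happy"),
]
print(recognition_accuracy(sample))
```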

The goal is to create characters that don't just mimic human speech but genuinely convey emotional states, making the audience connect with the story on a deeper level. This requires models that can interpret and generate nuanced emotional expressions, going beyond basic facial movements and body language to capture the subtle cues that define human affect.

It's a complex area, and researchers are constantly looking for ways to improve how these models handle emotion. The work on generating emotion semantic vectors is a good example of trying to find better ways to represent and control emotional expression in animation.

Immersive Experiences in Virtual Reality

Virtual reality (VR) really changes the game when it comes to how we experience stories. It's not just about watching something anymore; it's about being in it. When you put on a headset, you're transported to another place, and that feeling of presence can be incredibly powerful. This is especially true for short dramas where the goal is to make you feel something, to connect with the characters on a deeper level.

Social Presence in Human-Agent Interactions

One of the big things VR brings to the table is social presence. This is that feeling like you're actually there with someone, even if that 'someone' is a computer-generated character. The more realistic and natural the virtual character's expressions and movements are, the stronger that sense of connection becomes. It’s like the difference between looking at a picture of a friend and actually talking to them. When a virtual agent can mimic human behavior convincingly, it tricks our brains into believing we're in a real social situation. This is a huge step for things like virtual companions or even training simulations where you need to practice social skills. We're seeing a lot of work go into making these interactions feel less like talking to a robot and more like talking to a person. It’s a tricky balance, though, because even small glitches can break the illusion.

Physiological and Psychological Responses

Being immersed in VR can actually affect us physically and mentally. Studies have shown that when people feel present in a virtual environment, their heart rate might change, or they might feel a genuine sense of excitement or nervousness, much like they would in a real-world situation. This is because our brains are wired to react to what we perceive, and VR is getting really good at creating convincing perceptions. For example, if a virtual character looks distressed, you might feel a pang of empathy. This opens up possibilities for VR in therapy or for creating more impactful storytelling. It’s fascinating how our bodies and minds respond to these digital worlds.

Enhancing Conversational Realism

Making conversations with virtual characters feel real is a major hurdle. It's not just about the words they say, but how they say them – the tone of voice, the subtle facial expressions, the gestures. Generative models are getting better at producing animations that match speech, but there's still a gap. We want characters that can react naturally to what you say, maintain eye contact, and show a range of emotions without looking robotic.

Here’s a look at what makes a conversation feel real:

- Natural Speech Synchronization: The character's mouth movements should perfectly match their spoken words.

- Appropriate Non-Verbal Cues: This includes things like blinking, head nods, and subtle shifts in posture that convey engagement.

- Emotional Expressiveness: The ability to show a range of feelings through facial expressions and body language, not just a single default expression.

- Contextual Awareness: The character should seem to understand the flow of the conversation and respond accordingly.

Achieving this level of realism is key to making immersive drama truly compelling. It's about creating a believable digital actor that can hold your attention and make you forget you're interacting with a computer. The goal is to create experiences that are not just visually impressive but emotionally engaging, pushing the boundaries of what VR video can do.

Wrapping It Up

So, we've looked at how these 3D characters can show feelings, like being happy or just neutral. It turns out that making characters seem genuinely happy is a bit easier for the tech right now than showing quieter, more subtle emotions. While the computer-generated characters are getting pretty good, especially when they're supposed to be excited, they still have a way to go to feel as real as a person. Things like how enjoyable they are to interact with and how natural they seem still need work. It's clear that just looking at the technical side of things isn't enough; we really need to think about how people feel when they're watching and talking to these virtual characters. This whole area is still growing, and it'll be interesting to see how the models get better at showing the full range of human emotions.

Frequently Asked Questions

What is the main goal of this study about 3D animated characters?

The main goal is to see how well computer programs can create 3D animated characters that show emotions, like happiness or feeling calm, during conversations in virtual reality (VR). We want to know if these computer-made emotions feel real and natural to people watching them.

Why is virtual reality (VR) important for this research?

VR makes the experience feel more real and helps us understand how people connect with these animated characters. It's like being there with them, which gives us a better idea of how believable the emotions seem compared to just watching a video.

How did you test if the emotions looked real?

We asked people to watch and interact with the 3D characters in VR. They then told us how real, natural, and enjoyable the animations were. We also checked if the characters' movements and expressions seemed consistent and if they could show different emotions clearly.

Did the computer programs do a good job showing emotions?

The programs were better at showing strong emotions, like happiness, than calmer or more subtle feelings. When characters showed happiness, people thought they looked more real and natural. However, showing less intense emotions was harder for the computer programs.

How do these computer-made animations compare to real people?

We compared the computer-generated animations to animations made from recordings of a real actor. While the computer programs could create good animations, the recordings of the real actor often looked more realistic, especially for facial expressions. However, the recordings sometimes felt a bit stiff because the motion wasn't perfectly smooth.

What are the next steps for making better emotional animations?

We need to improve how these programs create calmer emotions and make sure the animations are always smooth and consistent. We also want to explore showing more complex feelings and making the characters' reactions even more lifelike, especially in fast-paced VR experiences.